Beyond the Map: Why Every Engineer Needs to Master the LRU Cache

We’ve all been there. You’re looking at a system and you realize the database is taking a beating. You’re seeing high "Random I/O," your buffer pool is thrashing, and the latency numbers are starting to creep up into the "we need to paged somebody" territory.

Your first instinct? Cache it. But "caching" isn't just throwing a HashMap at the problem. In a production system with finite memory, an unbounded map is a memory leak waiting to happen. You need a strategy to decide what stays and what goes. You need the Least Recently Used (LRU) algorithm.

The "Database Physics" of LRU

During my Masters, I spent months building a custom database engine from scratch. One of the biggest lessons from that "deep dive" into buffer management was this: Memory is a precious, finite resource, and the way you manage it defines your system's ceiling.

In a database, the buffer manager uses LRU (or a variation like LRU-K or Clock) to decide which pages to swap out to disk. If you get this wrong, your hit ratio drops, your disk I/O spikes, and your 10-hour calculation stays a 10-hour calculation instead of dropping to 3.

What exactly is an LRU Cache?

At its core, an LRU cache is a data structure that:

- Has a Fixed Capacity: It won't grow until your JVM explodes.

- Tracks Recency: Every time you access an item (a "Get") or add one (a "Put"), that item moves to the "front of the line."

- Evicts the "Cold" Data: When you hit capacity and try to add something new, the item that hasn't been touched for the longest time—the "Least Recently Used"—is kicked out.

The Architectural Trade-off: Why not just a Map?

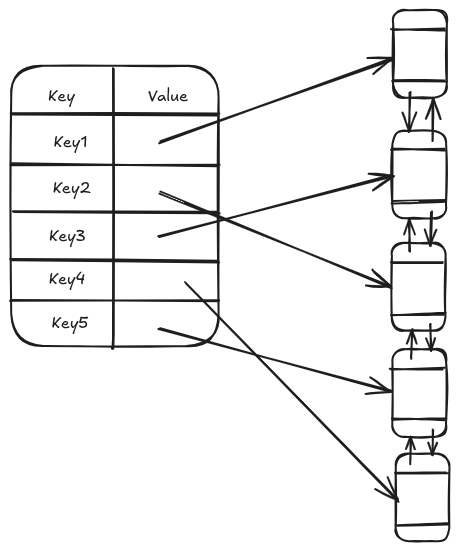

A standard HashMap gives you O(1) access, but it has no sense of order. A LinkedList gives you order, but searching it is an O(n) nightmare.

To build a Staff-level LRU, we combine them. We use a Doubly Linked List to maintain the order of recency and a HashMap to store references to the nodes in that list. This gives us the "Golden Ratio" of algorithm performance: O(1) for both Gets and Puts.

When should you actually use this?

Don't reach for an LRU for everything. Use it when:

- You have "Hot" and "Cold" data: If 80% of your users only access 20% of the data (the Pareto Principle), an LRU is incredibly effective.

- Predictable Memory Footprint: You need to guarantee that your service won't exceed a specific memory limit.

- Temporary Metadata: Storing session info, permissions, or API rate-limiting tokens.

The Implementation: A Kotlin Approach

When I'm interviewing Senior or Staff candidates, I don't just look for "working code." I look for Separation of Concerns. I want to see that the pointer arithmetic (the "how") is separated from the caching policy (the "what").

Here is the implementation I’ve been refining—it’s clean, generic, and handles the tricky "middle-node" pointer logic that trips most people up.

interface Cache<K, V> {

fun get(key: K): V?

fun put(key: K, value: V)

}

class LRUCache<K, V>(private val capacity: Int) : Cache<K, V> {

private val list = LinkedList<K, V>()

private val kvHolder = mutableMapOf<K, Node<K, V>>()

override fun get(key: K): V? {

val node: Node<K, V>? = kvHolder[key]

when {

node != null -> {

list.moveToHead(node)

return node.value

}

}

return null

}

override fun put(key: K, value: V) {

val node = kvHolder[key]

if (node != null) {

node.value = value

list.moveToHead(node)

} else {

val newNode = Node(key, value)

if (kvHolder.size >= capacity) {

val removedTail = this.list.removeTail()

kvHolder.remove(removedTail)

}

this.list.addToHead(newNode)

this.kvHolder[key] = newNode

}

}

}

class LinkedList<K, V>() {

var head: Node<K, V>? = null

var tail: Node<K, V>? = null

}

class Node<K, V>(val key: K, var value: V) {

var prev: Node<K, V>? = null

var next: Node<K, V>? = null

}

fun <K, V>LinkedList<K, V>.moveToHead(node: Node<K, V>) {

removeNode(node)

addToHead(node)

}

fun <K, V>LinkedList<K, V>.removeNode(node:Node<K, V>) {

val prevNode = node.prev

val nextNode = node.next

// Fix the "forward" pointers

if (prevNode != null) {

prevNode.next = nextNode

} else {

head = nextNode // It was the head

}

// Fix the "backward" pointers

if (nextNode != null) {

nextNode.prev = prevNode

} else {

tail = prevNode // It was the tail

}

// Crucial: Null out the deleted node's pointers to prevent memory leaks

node.prev = null

node.next = null

}

fun <K, V>LinkedList<K, V>.addToHead(node:Node<K, V>) {

if (this.head == null) {

this.head = node

this.tail = node

} else {

node.next = this.head

this.head?.prev = node

this.head = node

}

}

fun <K, V>LinkedList<K, V>.removeTail(): K? {

val currentTail = this.tail ?: return null

val key = currentTail.key

removeNode(currentTail)

return key

}

The "Staff" Details: What the code doesn't show

If you’re implementing this in a production environment, you can't just stop at the algorithm. You have to think about the Operational Reality:

- Concurrency: The code above is not thread-safe. In a multi-threaded web server, you’d want to wrap these operations in a

ReentrantReadWriteLock. Why? Becauseget()calls can happen in parallel, butput()andmoveToHead()need exclusive access. - Memory Pressure: If your values are large, consider using

SoftReference. This tells the JVM, "Hey, keep this in memory unless you're about to crash with an OOM error, then feel free to clear it." - Observability: You should be tracking your Cache Hit Ratio. If your hit ratio is 5%, your cache isn't helping; it's just adding latency and wasting memory.

Wrapping Up

Understanding the LRU cache is a rite of passage for high-level engineers. It connects the dots between low-level memory management and high-level system architecture.

Whether you're optimizing a Postgres instance or designing a new microservice, remember: The best systems aren't the ones that can hold the most data; they're the ones that know exactly which data to throw away.

Next Steps:

- How would you modify this to be LfuCache (Least Frequently Used)?

- Want to see how to implement this using a

ReentrantReadWriteLockto make it production-ready?

Let me know in the comments!

Member discussion